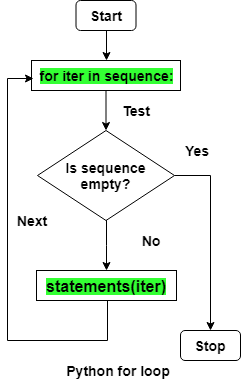

In this article I will discuss the most popular vectorized functions available in the NumPy library, compare their speed on my local computer against traditional for-loops, and show how we can vectorize a custom function.

Vectorization of transformation operations

CPUs use SIMD (Single Instruction, Multiple Data) instructions to achieve faster speeds by applying one operation to several data elements at once. Vectorization essentially means that a function is applied in parallel to many values of the iterable, unlike a traditional for-loop, which handles one value per iteration. NumPy’s vectorized functions also don’t perform explicit type checks on each iteration, which saves valuable CPU time.
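As a rough illustration of the comparison described above, here is a minimal sketch that squares the same values with a plain for-loop and with a NumPy expression. The function names `square_loop` and `square_vectorized` and the array size are assumptions made for this example, and the timings will vary from machine to machine.

```python
import timeit

import numpy as np


def square_loop(values):
    """Square each value one at a time with an explicit Python loop."""
    out = []
    for v in values:
        out.append(v * v)
    return out


def square_vectorized(values):
    """Square every element of a NumPy array with a single expression."""
    return values * values


# Illustrative input: 100,000 64-bit integers (size chosen arbitrarily).
data = np.arange(100_000, dtype=np.int64)
data_list = data.tolist()

loop_result = square_loop(data_list)
vec_result = square_vectorized(data)

# Time a few repetitions of each approach; absolute numbers are machine-dependent.
loop_time = timeit.timeit(lambda: square_loop(data_list), number=5)
vec_time = timeit.timeit(lambda: square_vectorized(data), number=5)

print(f"for-loop:   {loop_time:.4f} s")
print(f"vectorized: {vec_time:.4f} s")
```

Both versions produce the same values; only the way the work is dispatched differs.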

Type checking during execution is a time-consuming process and is one of the main reasons behind Python’s slow performance compared to C, especially in for-loops. C is a statically typed programming language, so type checking happens at compile time. Python is a dynamically typed programming language, meaning that type checking of data happens at runtime.
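The difference can be seen from Python itself. In the sketch below (variable names are illustrative), a list may hold objects of different types, so the interpreter has to inspect each element as it goes, while a NumPy array stores one fixed element type for the whole buffer.

```python
import numpy as np

# A Python list can mix types, so each element's type is only known at runtime.
mixed = [1, 2.5, "3"]
element_types = [type(x).__name__ for x in mixed]
print(element_types)

# A NumPy array has a single dtype shared by every element,
# so the type is resolved once for the whole array.
arr = np.array([1, 2, 3], dtype=np.int32)
print(arr.dtype, arr.itemsize)  # element type and its size in bytes
```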

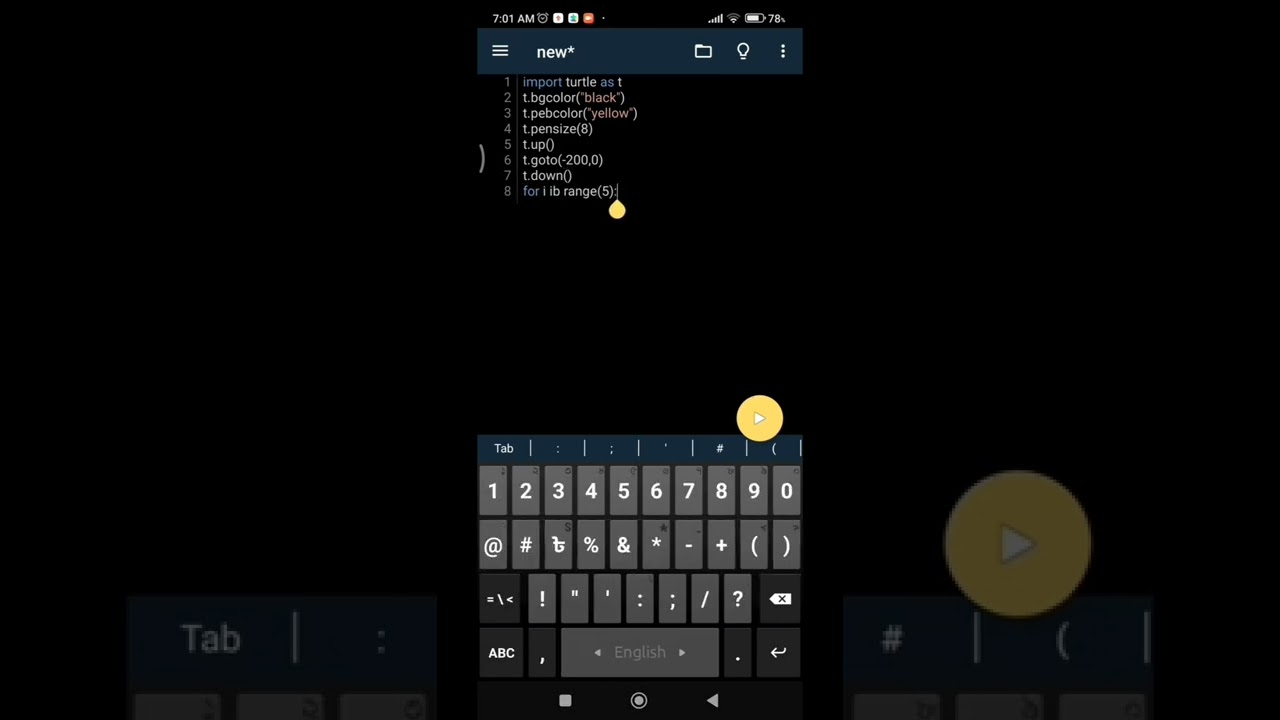


Vectorization is what gives Python performance close to that of C, quite literally, because NumPy’s vectorized functions use optimized, precompiled C code under the hood. In data science applications, pre-processing the data and running feature generation or cleaning operations across all the records of a dataset can be time-consuming when the number of records is large. Many internet companies nowadays use Python in their backend server applications. When dealing with huge amounts of data, for example in an application that creates dashboards for large datasets supplied by the user, data processing is the bottleneck in serving the user fast responses.

In general, not only loops can be vectorized. There is also a linear vectorizer (in LLVM it is called the SLP vectorizer), which searches for similar independent scalar instructions and tries to combine them. To check the vector capabilities of your CPU, you can type `lscpu`.
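For the feature generation functions mentioned above, a custom scalar function can be applied to an array in two ways. The sketch below uses a hypothetical `shipping_fee` rule invented for this example. `np.vectorize` wraps the scalar function for convenience, but the NumPy documentation notes that it is essentially a for-loop and is not provided for performance; rewriting the rule with array operations such as `np.where` is what moves the work into compiled code.

```python
import numpy as np


def shipping_fee(weight_kg):
    """Hypothetical scalar rule: flat fee, then per-kg surcharge, then a cap."""
    if weight_kg <= 1.0:
        return 5.0
    if weight_kg <= 10.0:
        return 5.0 + 1.5 * (weight_kg - 1.0)
    return 25.0


weights = np.array([0.5, 1.0, 4.0, 10.0, 12.0])

# Option 1: wrap the scalar function. Convenient, but still calls Python per element.
# otypes is given explicitly so the output dtype does not depend on the first result.
fee_wrapped = np.vectorize(shipping_fee, otypes=[float])
fees_a = fee_wrapped(weights)

# Option 2: express the same rule with whole-array operations.
fees_b = np.where(
    weights <= 1.0,
    5.0,
    np.where(weights <= 10.0, 5.0 + 1.5 * (weights - 1.0), 25.0),
)

print(fees_a)
print(fees_b)
```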

Historically, Intel has had three instruction sets for vectorization: MMX, SSE and AVX. Vector registers for those instruction sets are described here. Vector operations can give significant performance gains, but they come with quite a few restrictions, which we will cover later.

Consider the function `void foo(std::vector& lhs, std::vector& rhs)`, whose elements, judging by the assembly, are 32-bit integers. Let’s compile it with clang using the options `-O2 -march=core-avx2 -std=c++14 -fno-unroll-loops`. I turned off loop unrolling to simplify the assembly, and `-march=core-avx2` tells the compiler that the generated code will be executed on a machine with AVX2 support.

Scalar version:

- `mov edx, dword ptr` # loading rhs
- `mov dword ptr, edx` # store result to lhs

Vector version:

- `vmovdqu ymm1, ymmword ptr` # loading 256 bits from rhs
- `vpsubd ymm1, ymm1, ymm0` # ymm0 has all bits set (that is, -1), so subtracting it acts like +1
- `vpsrld ymm1, ymm1, 1` # vector shift right
- `vmovdqu ymmword ptr, ymm1` # storing result

Here you can see that the vector version crunches 8 integers at a time (256 bits = 32 bytes = 8 x 32-bit integers). The number of instructions executed is smaller in the vector version, although vector instructions can have higher latency than their scalar counterparts. If there are not enough elements in the vector to use the vector version, the scalar version is taken. If you analyze the assembly carefully enough, you will spot the runtime check that dispatches between those two versions.
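The body of `foo` is not shown, but the assembly comments suggest that each element has one added to it and is then shifted right by one bit. Assuming that reading, and assuming unsigned 32-bit elements, a NumPy analogue of the loop could be sketched as follows. The variable names mirror `lhs` and `rhs` from the signature; the lane arithmetic at the end only illustrates why a 256-bit register holds 8 such integers.

```python
import numpy as np

# Assumed element type: unsigned 32-bit integers (the dword operations above).
rhs = np.arange(16, dtype=np.uint32)

# Assumed loop body, inferred from the assembly comments: add 1, then shift right by 1.
lhs = (rhs + 1) >> 1

# How many elements fit in one 256-bit AVX2 register.
bits_per_element = rhs.itemsize * 8
lanes = 256 // bits_per_element

print(lhs)
print(lanes)
```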